Introducing AI Experience Scores

For decades, we’ve relied on CSAT to quantify customer sentiment — translating nuanced support experiences into a single score. It’s directionally useful, but inherently incomplete.

Support leaders are well aware that CSAT reflects a small, self-selecting slice of their customer base. Typically only 5–10% of customers respond. It can also be a shallow measurement with limited insight into “why” a customer was unhappy: was it the product, a policy, or the support experience?

And the gap widens with AI.

AI agents now handle a growing share of customer conversations — yet customers tend to evaluate AI interactions more critically than human ones. Traditional metrics don’t fix this. Containment, resolution, deflection, AHT — they answer what happened. They rarely answer how it felt.

“Contained” only means the ticket didn’t escalate. It says nothing about whether the customer left the interaction feeling frustrated or relieved.

Without a better way to measure experience, support teams are scaling AI blind — left waiting for customer complaints to surface. They can't iterate quickly because they don't know what's actually broken. And when leadership asks, "Is our AI improving the customer experience?", the honest answer is often, "We're not entirely sure."

What we built: Automatic experience evaluation for every AI conversation

That’s why we built a new way to evaluate the quality of every AI support interaction at scale. We call it the AI Experience Score. AI Experience Scores aren’t designed to throw out CSAT or operational metrics, but to complete the picture.

You can already see what happened — whether the case was resolved, handed off, or abandoned. But now every AI-handled conversation in Assembled is automatically evaluated for experience quality. So you can see how the interaction actually felt to the customer.

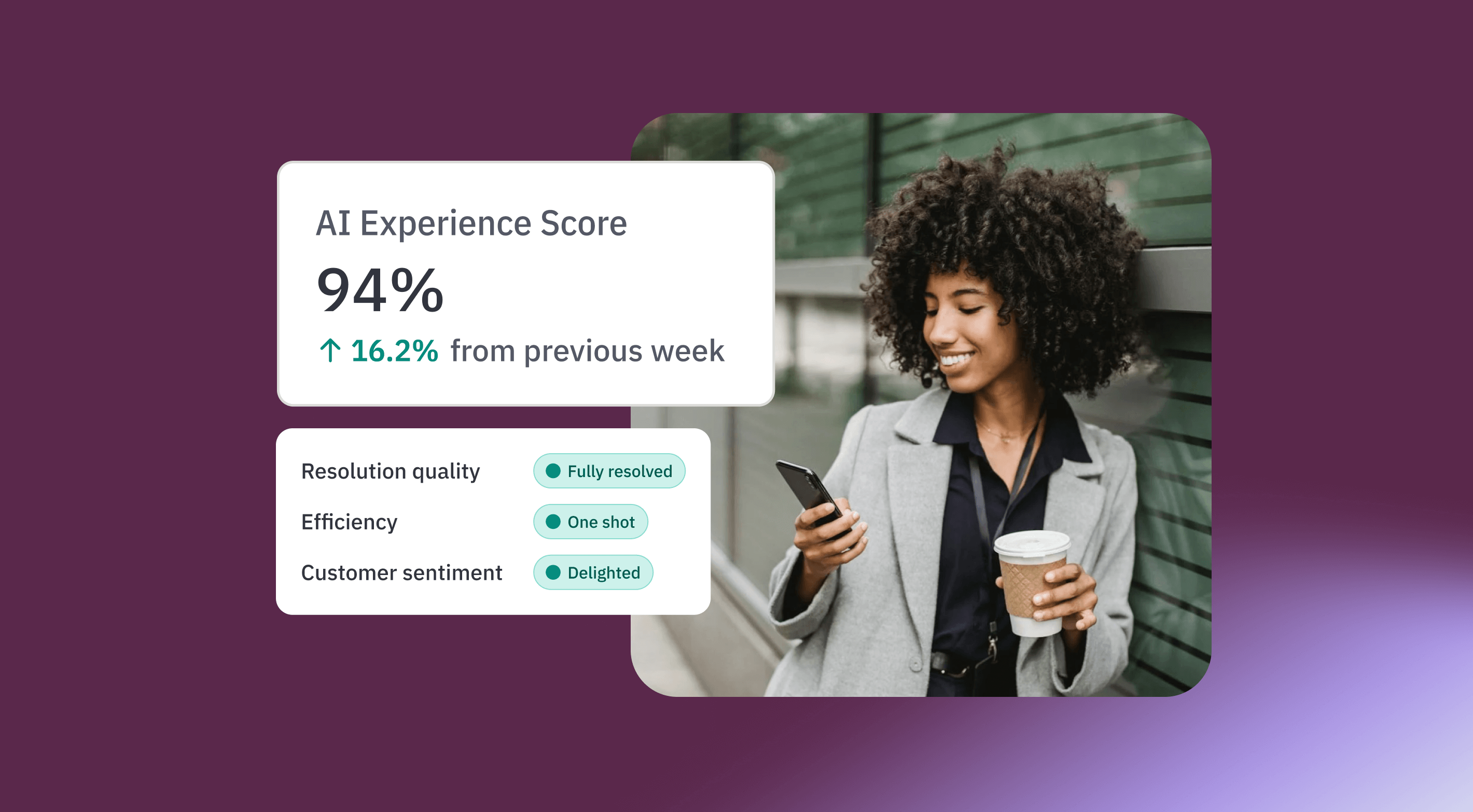

Unlike many proprietary metrics, it’s not a black box. Scores are transparent, auditable, and built to enhance — not replace — the signals you already trust. And unlike CSAT, 100% of conversations are automatically evaluated. This ensures a consistent view of the customer experience at scale alongside traditional metrics like resolution rate.

How it works

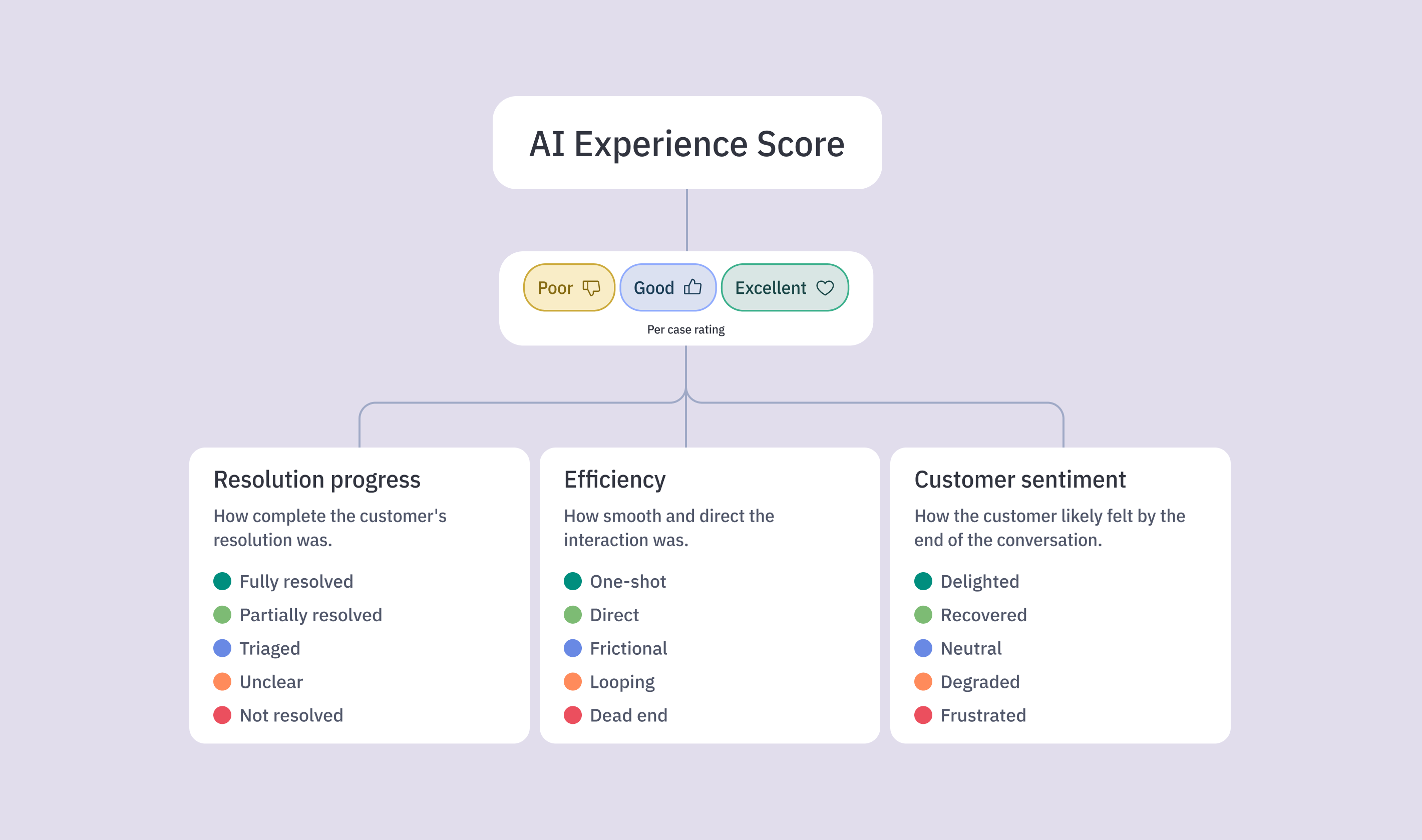

Every AI-handled interaction is given an experience rating of excellent, good, or poor based on three subcomponents: resolution progress, efficiency, and customer sentiment.

- Resolution progress evaluates how far the AI meaningfully moved the customer toward a resolution by assessing intent understanding, relevance of responses, and concrete steps taken toward resolution.

- Efficiency measures how directly and smoothly the customer reached that outcome, identifying friction like looping, excessive clarifications, or broken workflows that make interactions feel harder than they should.

- Customer sentiment tracks the customer’s emotional trajectory throughout the course of the conversation.

Together, these dimensions provide a complete view of whether the AI truly delivered a high-quality customer experience.

Using all of the per-case experience ratings, your overall AI Experience Score is the number of cases that received an experience rating of good or excellent divided by the total number of cases evaluated, much like how you would typically determine CSAT.
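To make the arithmetic concrete, here is a minimal Python sketch of that calculation. The function name, the lowercase rating labels, and the choice to return `None` when no cases were evaluated are assumptions made for illustration; this is not Assembled's implementation or API.

```python
# Illustrative sketch only: names and the empty-input behavior are assumptions.
POSITIVE_RATINGS = {"excellent", "good"}
VALID_RATINGS = POSITIVE_RATINGS | {"poor"}


def ai_experience_score(ratings):
    """Return the share of evaluated cases rated good or excellent (0.0 to 1.0)."""
    ratings = list(ratings)
    unknown = set(ratings) - VALID_RATINGS
    if unknown:
        raise ValueError(f"unexpected ratings: {sorted(unknown)}")
    if not ratings:
        return None  # no evaluated cases, so the score is undefined
    positive = sum(1 for r in ratings if r in POSITIVE_RATINGS)
    return positive / len(ratings)


example = ["excellent", "good", "good", "poor", "excellent"]
print(f"{ai_experience_score(example):.0%}")  # 4 of 5 cases -> 80%
```

Because every AI-handled conversation is evaluated, the denominator here is all evaluated cases rather than only the customers who chose to answer a survey.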

Transparent by design

We believe experience measurement should be explainable. Your AI Experience Score is not a black box.

For every conversation, you can:

- Drill into the individual case

- See each sub-score

- Understand the rationale behind the rating

- Review transcripts alongside the evaluation

And you can still:

- Use CSAT from your helpdesk

- Track containment and resolution metrics

- Perform manual QA

- Define your own quality standards

AI Experience Scores work alongside your existing evaluation framework — not instead of it.

We’re comfortable putting our scoring next to your own metrics because you should never have to outsource your quality standards to a single vendor.

Built for action, not just insight

Traditional metrics tell you what your AI did. The AI Experience Score tells you what your customers felt. Looking at both together unlocks a new level of insight:

If AI resolves many cases but AI Experience Scores are poor, your system may be technically successful — but creating friction. You should review your prompts and conversation design before customers get frustrated at scale.

If AI Experience Scores are strong but many conversations are handed off, the experience is solid — but the AI lacks capability. You should expand knowledge or agentic action coverage.
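The two scenarios above can be sketched as a simple lookup on a pair of metrics. The function name and both threshold values are hypothetical placeholders chosen for illustration, not numbers from Assembled; a team would set its own cutoffs. Combinations the scenarios above do not describe return `None`.

```python
# Illustrative sketch only: thresholds are hypothetical, not Assembled defaults.
def suggest_next_step(resolution_rate, experience_score,
                      resolution_floor=0.6, experience_floor=0.8):
    """Map a (resolution rate, AI Experience Score) pair to a suggested action."""
    resolves_many = resolution_rate >= resolution_floor
    feels_good = experience_score >= experience_floor

    if resolves_many and not feels_good:
        # Technically successful, but creating friction.
        return "review prompts and conversation design"
    if feels_good and not resolves_many:
        # Solid experience, but many handoffs: the AI lacks capability.
        return "expand knowledge or agentic action coverage"
    return None  # combination not covered by the two scenarios


print(suggest_next_step(resolution_rate=0.75, experience_score=0.55))
print(suggest_next_step(resolution_rate=0.40, experience_score=0.90))
```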

If AI is going to represent your brand in thousands of conversations every day, you need the ability to continuously improve those experiences.

Get started

AI Experience Scores are available now to all Assembled customers using AI support agents.

Want to see it in action? Chat with our team to see how AI Experience Scores or other Assembled QA and monitoring features work with your specific operational needs.