AI at Assembled: An empirical comparison of LLM evaluation metrics in a customer support setting

The importance of quality

Several months ago, Assembled piloted a large language model (LLM) product for customer support agents with some of its closest customers. As demand for Assembled Assist — known internally as Cal — has grown, our team built out several versions of systems to evaluate and benchmark the quality of its responses. Measuring quality for us is critical for three reasons:

- Preventing regressions: We need to ensure that changes to our AI systems do not suddenly result in lower-quality answers to our customers (this includes ourselves — Assembled’s own customer support team dogfoods Cal).

- Understanding customer or structural deficiencies: It’s possible that certain parts of our AI system perform differentially, or that certain types of customers may have varying experiences.

- Measuring and prioritizing improvements: For example, let’s say Reciprocal Rank Fusion (RRF) causes a slight increase in end-to-end latency. We want to be able to measure the improvement that RRF brings us to make an educated decision as to whether the increased performance is worth the marginal cost.

Agent architecture

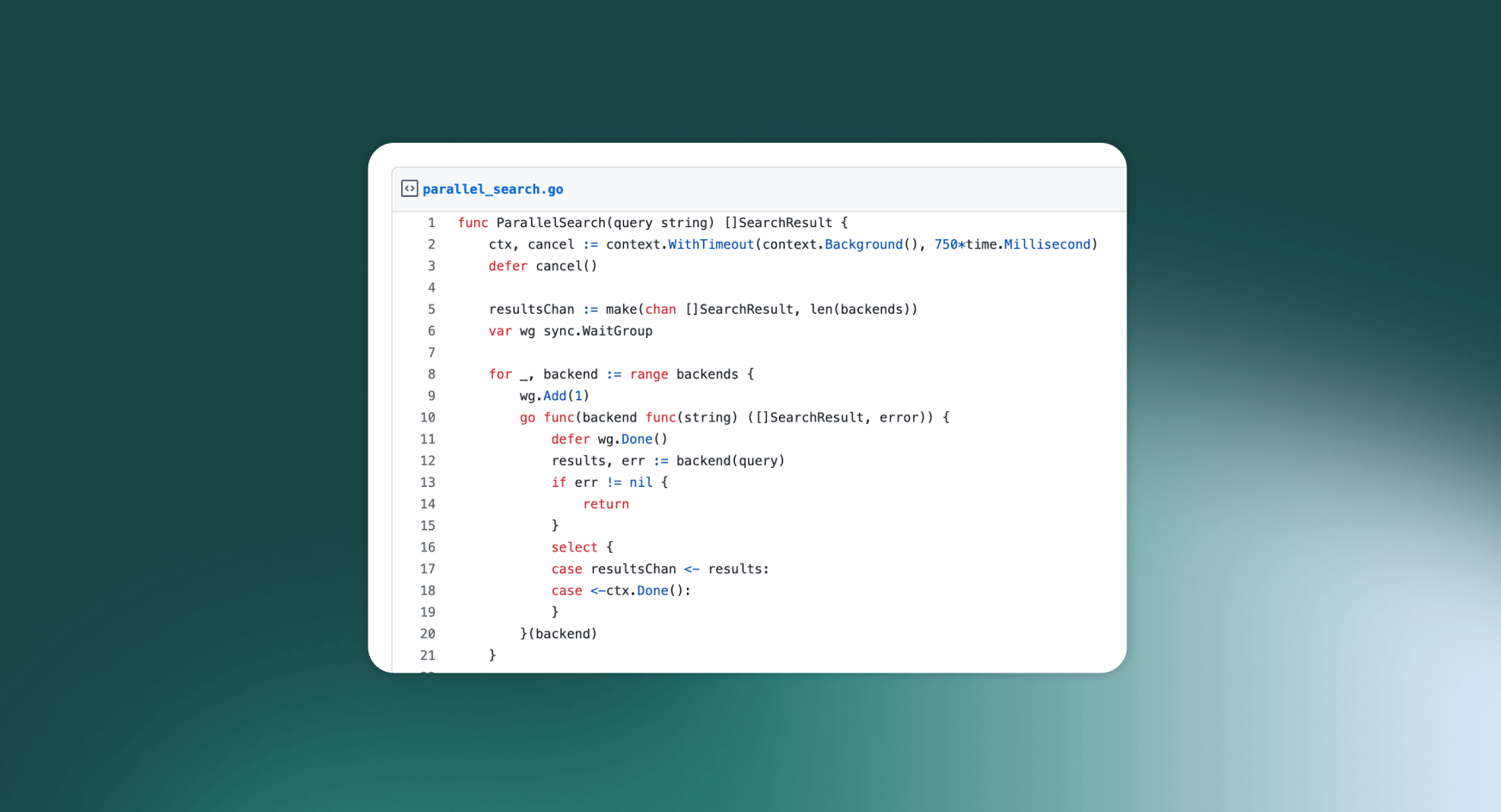

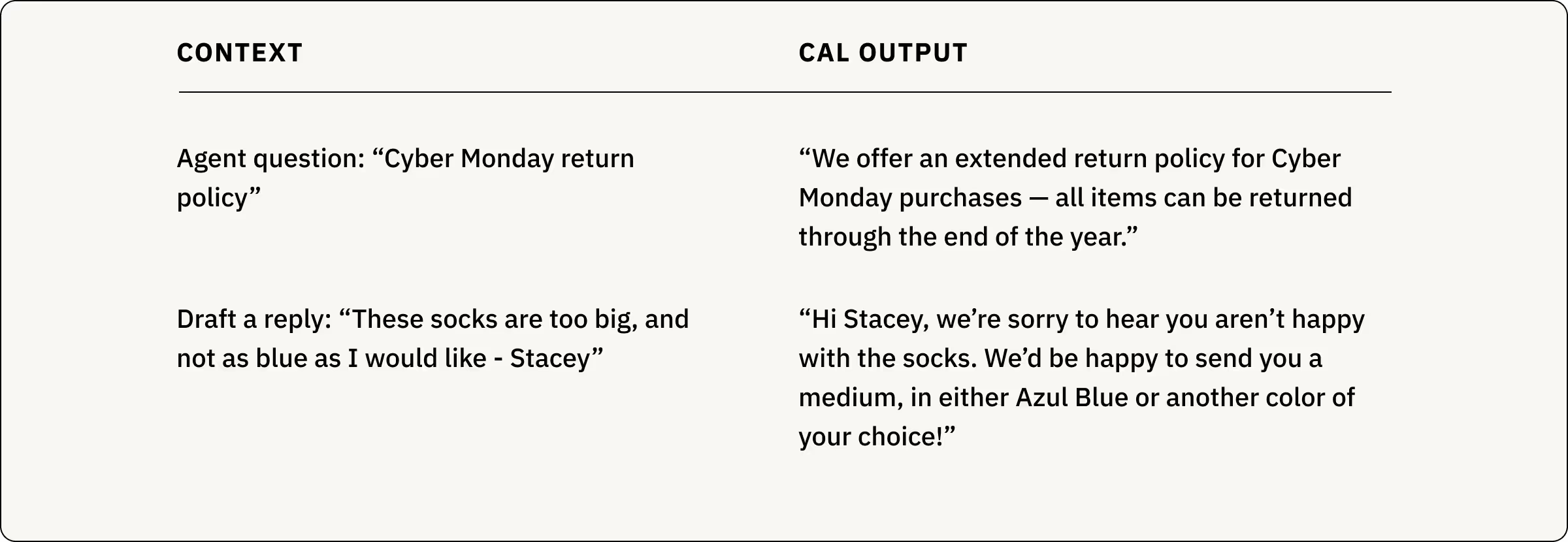

Cal is an LLM-based assistant that supercharges customer support agents by providing them with the right answer at the right time. Given some context of a conversation, Cal pulls in relevant information for agents and drafts a reply they can use in their conversations with customers.

Here are some examples of stylized Cal context → output interactions:

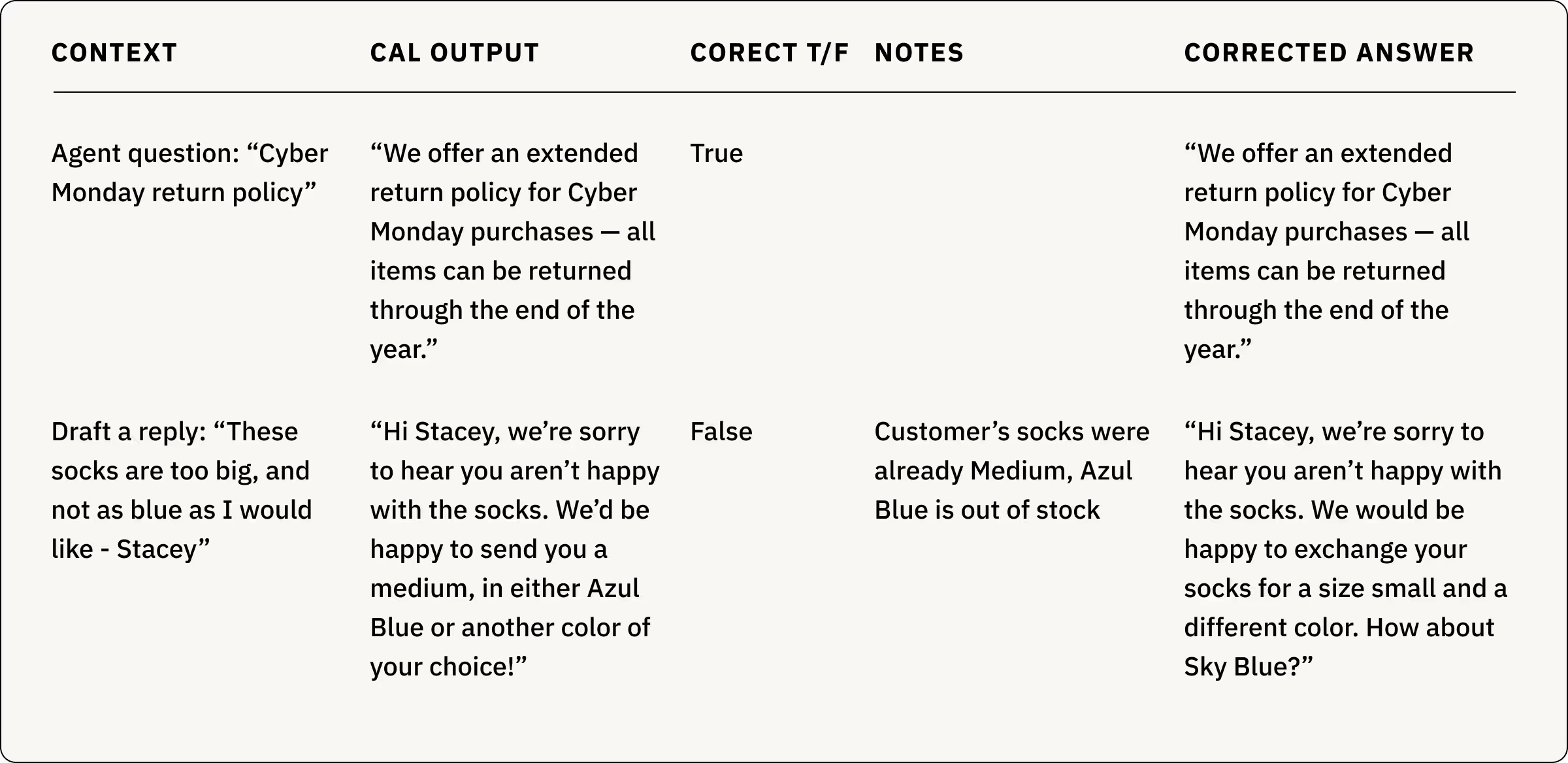

To create an evaluations dataset, we manually score each Cal interaction. We want to ensure that Cal’s tone is aligned with the company’s tone and that the answers are accurate.

Here is the same dataset as above, but now scored by a human for quality, and also a corrected answer. If Cal’s answer is correct, we simply copy it to the Correct Answer column.

For this example dataset, we would deem Cal’s accuracy to be 50% (real life accuracy is much higher — this is just an example!).

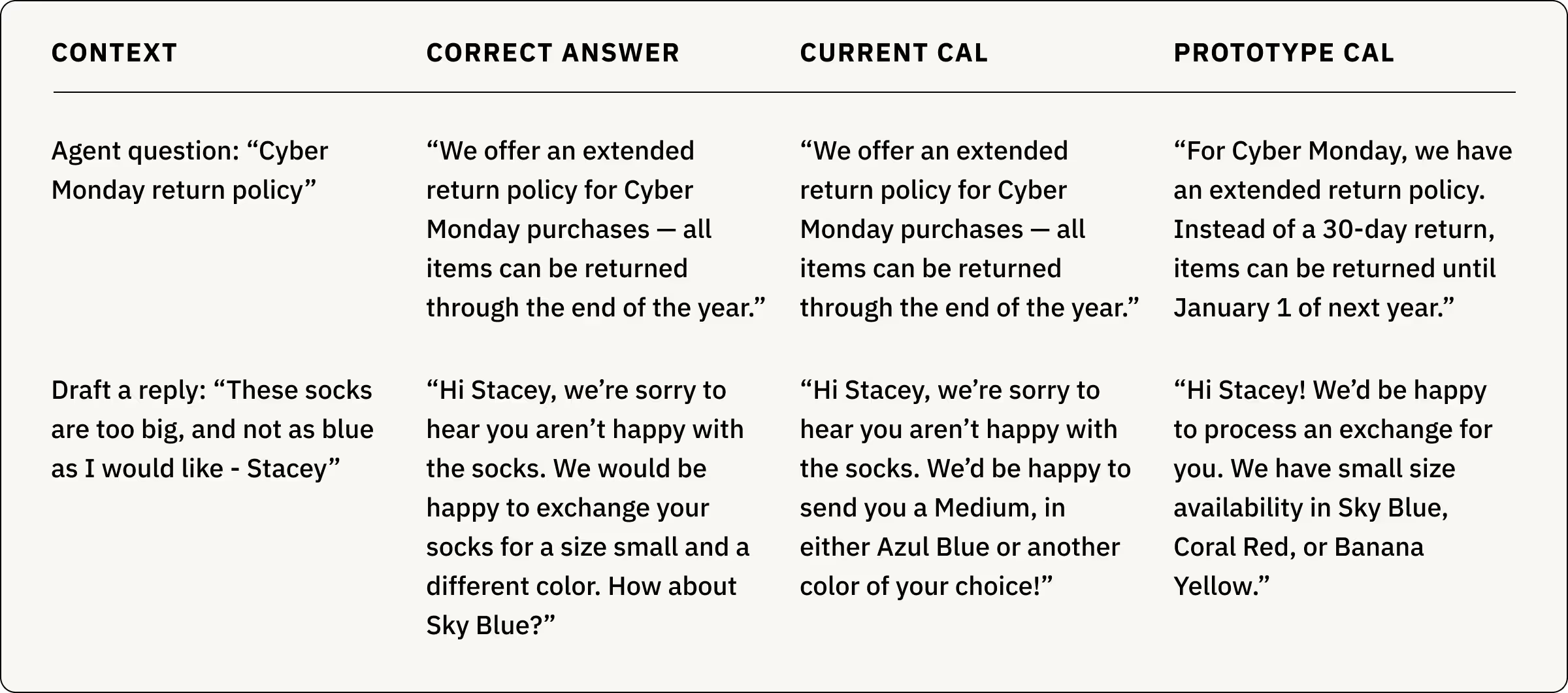

Let’s say we update Cal to better understand inventory data in an attempt to get that sock return question right. How do we make sure the new answer is better and that overall performance doesn’t decrease? We can compare the new, prototype Cal vs. current Cal for both questions:

An astute reader might observe that Prototype Cal’s reply for the first question is semantically different than Current Cal, but factually congruent. For the second question, Prototype Cal captures the gist of the correct answer in a way that Current Cal didn’t — but the wording isn’t going to be exactly the same as our human-generated correct answer.

Besides reading each answer and manually scoring it, how do we assess if Prototype Cal, on average, performs better than Current Cal, for the hundreds of evaluation cases we have?

Automating evaluations

There are a plethora of automated LLM-evaluation systems, but there’s limited literature on the relative performance between evaluation types. We want to make sure that any evaluation system we use correctly encapsulates how we think about quality with Cal. For example, tone is very important to us. The right message conveyed with the wrong tone is not acceptable to equip customer support agents with.

So, to understand if we can trust automated evaluation techniques, we must evaluate them!

For the purposes of this post, we’ll compare six evaluation methods of varying complexity:

- Levenshtein Distance: A common type of edit distance that counts how many text edits need to be performed to make two texts equal.

- BLEU (”BiLingual Evaluation Understudy”): A metric focused on machine translation that compares n-grams between the candidate and reference texts.

- ROUGE (”Recall-Oriented Understudy for Gisting Evaluation”): A recall-focused evaluation that also utilizes n-gram overlap. We use two variants: Rouge-2, which uses bigrams, and Rouge-L, which uses longest common subsequence.

- BERTScore: Cosine similarity using pre-trained contextual embeddings from BERT.

- Ragas metrics: An open-source LLM evaluation suite that supports several metrics. We use their Answer Correctness metric, which is a weighted mean of semantic and factual similarity with the provided ground-truth answer. We’ll use their default weights, which puts an equal balance on semantic and factual similarity.

- Custom LLM Prompt: We also developed our own LLM-based evaluation that compares a given Correct Answer and Proposed Answer, and returns true if they are equivalent answers.

While our hypothesis is that evaluation metrics leveraging embeddings and LLMs would outperform simpler methods, we also need to balance the cost, latency, and interpretability of the different evaluation methods. There is also some evidence finding biases in LLMs-as-evaluators, so we need to be very careful with blindly automating our evaluation metrics.

Methodology

To evaluate the evaluation metrics, we take the evaluations dataset described previously, and, for each question, calculate the evaluation metric on the correct answer vs. Cal’s answer:

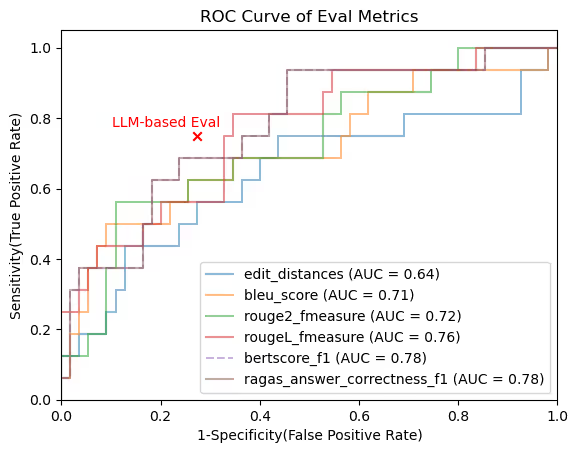

A good evaluation metric is one in which the scores are highly predictive of whether the Cal answer is “correct”; if the score is low, Cal’s answer should be wrong, and if the score is high, Cal’s answer should be correct. To quantify this predictiveness, we use Receiver Operator Characteristic (ROC) Curves, a standard technique for evaluating binary classifiers, and the corresponding summary metric, Area Under the Curve (AUC). This system allows us to compare scores even with very different scales (for example, Edit Distance vs. BLEU Score).*

*Two notes on methodology here: First, our custom LLM prompt evaluation only returns True (”The candidate and reference provide the same answer”) or False, not a metric score, so is not amenable to a full ROC Curve. We’ll simply plot its performance as a single point on the ROC curve. Second, many of the quantitative scores output per-answer recall, precision, and f1 measures. To keep this comparison simple, we use the f1 score for each evaluation metric.

Results and learnings

Here, we plot the results of our meta-evaluation on a dataset of 40 annotated evaluations and describe our key learnings.

Our main finding validates our intuition before running the meta-evaluation: More sophisticated evaluations that leverage embeddings (LLM Eval, Ragas, BERTScore) perform better than n-gram-based metrics (Rouge, Bleu). Simple edit distance performed the worst. Fascinatingly, our home-grown LLM Eval dominates the quantitative metrics, with the downside that it cannot produce calibrated probability estimates.

Second, while these scores aren’t amazing, they do suggest that, in aggregate, we are able to compare versions of Cal against one other in an automated fashion. If a new version of Cal has a significantly higher metrics score than our current version, we can feel confident that, on average, accuracy will be improved. Automating these evaluations will save us thousands of hours that we can spend on building new feature sets or launching with new customers.

Finally, the relatively high performance of the LLM Evaluation is especially exciting and leads to some conversations about the relative cost vs. performance of this method. For example, we could consider the relative performance of automatically scoring 2,000 evaluations with a cheaper method as opposed to a smaller subset through an LLM-based Eval. That said, none of the models presented here are near perfect, and we’ll continue to build on this first system through additional development and tweaking. We also aren’t afraid to get our hands dirty and manually assess quality when making large changes to our system or tackling a new type of problem set.