What is agentic workforce management?

A real-time analyst opens a dashboard, spots an adherence miss, messages the agent. A planner pulls historical volumes to build next quarter's staffing plan. A scheduler opens a spreadsheet to balance coverage against fairness rules. This is how WFM has worked for 30 years.

Each of those steps requires a human to notice something before anything happens. The system is reactive by design.

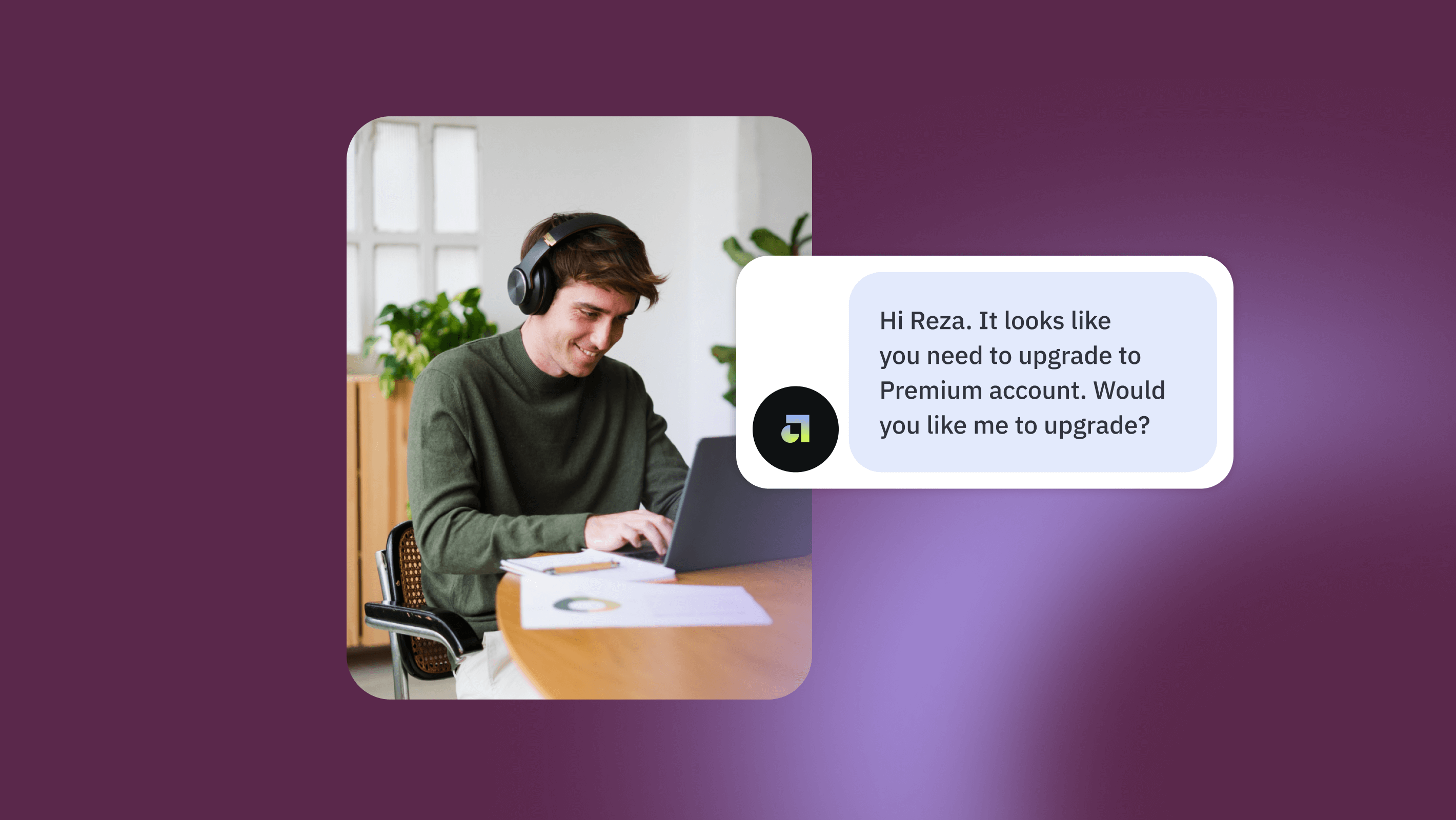

Agentic workforce management changes the underlying model. When WFM data, AI, and automated actions are connected, the system monitors continuously, surfaces what matters, and acts on routine tasks before anyone has to ask. The WFM platform becomes background infrastructure. The conversation with AI becomes the front end.

What does "agentic" mean in WFM?

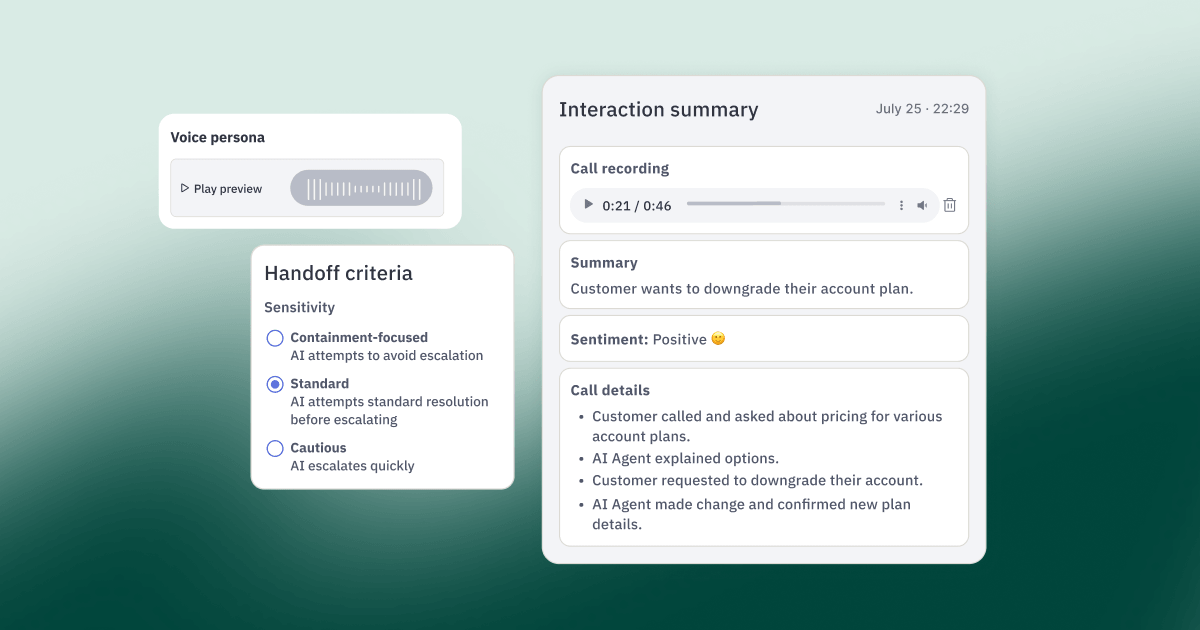

Agentic WFM means AI agents handle routine operational work without waiting for a human to initiate each task. Monitoring adherence, generating reports, flagging coverage gaps, publishing overtime (OT) slots: the system does these things on its own. The WFM professional engages when judgment is required.

The contrast with where most teams are today is useful. Right now, most WFM teams use AI the way they use Google: open a tab, type a question, get an answer, close the tab. The workflow doesn't change. You're just faster at individual tasks.

Agentic WFM is different. It's Tuesday morning. You'd normally spend an hour piecing together your daily readout. Instead, you ask: "How did we perform yesterday across voice and chat, what drove the misses, and what's the risk for today?" Claude pulls SLA from your WFM platform, ticket volume from your helpdesk, and agent availability from your HRIS. It surfaces the two queues that missed and why. It flags that today's forecast looks light given current call patterns. Then you say: "Draft a Slack post for the team and put 4 hours of OT on the table for the chat queue." It writes the message, publishes the OT slot, posts to Slack. You never opened a single tool.

That's the end state. Most teams aren't there yet, but the path is clearer than it's ever been.

Where are most WFM teams today?

The adoption curve breaks into three phases, and most teams are somewhere between the first two.

Phase 1: Asking questions. AI is a smarter search engine. You type a question, get an answer, close the tab. Your week looks the same as it did before.

Phase 2: Automating tasks. You've moved past one-off questions. You've built a prompt that generates your Monday report the way you like it. Maybe a script that pulls yesterday's adherence data and posts a summary in Slack before standup. You're running workflows, not just asking questions.

Phase 3: Operating agentically. Every tool your team touches is connected to a single AI platform. Agents run on your behalf across WFM, your helpdesk, Slack, and BI. You're not opening dashboards anymore. You're governing a system that does the work and surfaces what needs your call.
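To make Phase 2 concrete, here is a minimal sketch of the kind of script described above: it takes yesterday's adherence records and builds a short summary you could post before standup. The record fields, the 90% target, and the sample data are all assumptions for illustration, not any platform's actual export format. The Slack posting step is left out on purpose, since it depends on how your workspace is set up.

```python
from dataclasses import dataclass


@dataclass
class AdherenceRecord:
    # Hypothetical shape of one agent's adherence row for a single day.
    agent: str
    queue: str
    scheduled_minutes: int
    adherent_minutes: int


def summarize_adherence(records, target=0.90):
    """Build a short, Slack-ready adherence summary grouped by queue."""
    totals = {}
    for r in records:
        sched, adh = totals.get(r.queue, (0, 0))
        totals[r.queue] = (sched + r.scheduled_minutes, adh + r.adherent_minutes)

    lines = ["Adherence summary for yesterday:"]
    for queue in sorted(totals):
        sched, adh = totals[queue]
        rate = adh / sched if sched else 0.0
        # Flag any queue that landed under the (assumed) target.
        flag = "" if rate >= target else " (below target)"
        lines.append(f"- {queue}: {rate:.0%}{flag}")
    return "\n".join(lines)


# Made-up sample data standing in for a real WFM export.
sample = [
    AdherenceRecord("ana", "voice", 480, 456),
    AdherenceRecord("ben", "voice", 480, 440),
    AdherenceRecord("cy", "chat", 480, 400),
]
message = summarize_adherence(sample)
print(message)
```

The point of packaging it as a function is that anyone on the team can run it and get the same format, which is the first step from a personal prompt toward a shared workflow.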

According to McKinsey's 2025 State of AI Report, 62% of organizations are in early stages, experimenting with agents but not yet scaled. The gap between "I built a cool prompt" and "this is how my team works now" is where almost everyone is sitting right now.

That gap is the whole game. Teams that close it in the next 12 months build an operational advantage that's hard to catch.

What does agentic WFM look like in practice?

The clearest picture comes from teams already running it in production.

One of our customers runs a contact center supporting a marketplace of service businesses. They built an AI system that continuously monitors agent adherence. When an agent goes over on lunch, the system detects it, adjusts the schedule, and messages the agent directly. The scanning, spotting, messaging, and schedule adjustment that a real-time analyst (RTA) would normally do by hand happens with no human watching a dashboard and no manual intervention. They're saving FTE because of it. This isn't a pilot. It's production.
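We can't share that customer's implementation, but the core check is simple enough to sketch. The version below is a simplified illustration under stated assumptions: the `ScheduledBreak` type, the two-minute grace period, and the action names are hypothetical, and a real system would read agent state from the WFM platform rather than take it as an argument.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ScheduledBreak:
    # Hypothetical representation of one scheduled lunch or break.
    agent: str
    start: datetime
    end: datetime


def check_break_overrun(brk, now, still_on_break, grace=timedelta(minutes=2)):
    """Return proposed actions if the agent is past break end plus a grace period."""
    if not still_on_break or now <= brk.end + grace:
        return []
    minutes_over = int((now - brk.end).total_seconds() // 60)
    return [
        # Action names are placeholders for whatever the platform exposes.
        ("message_agent", f"{brk.agent}: your break ended {minutes_over} min ago."),
        ("adjust_schedule", minutes_over),
    ]


lunch = ScheduledBreak(
    agent="ana",
    start=datetime(2025, 1, 7, 12, 0),
    end=datetime(2025, 1, 7, 12, 30),
)
actions = check_break_overrun(lunch, datetime(2025, 1, 7, 12, 37), still_on_break=True)
print(actions)
```

Returning a list of proposed actions, rather than acting inside the check, keeps the detection logic easy to test and leaves room for a rule that routes unusual cases to a human.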

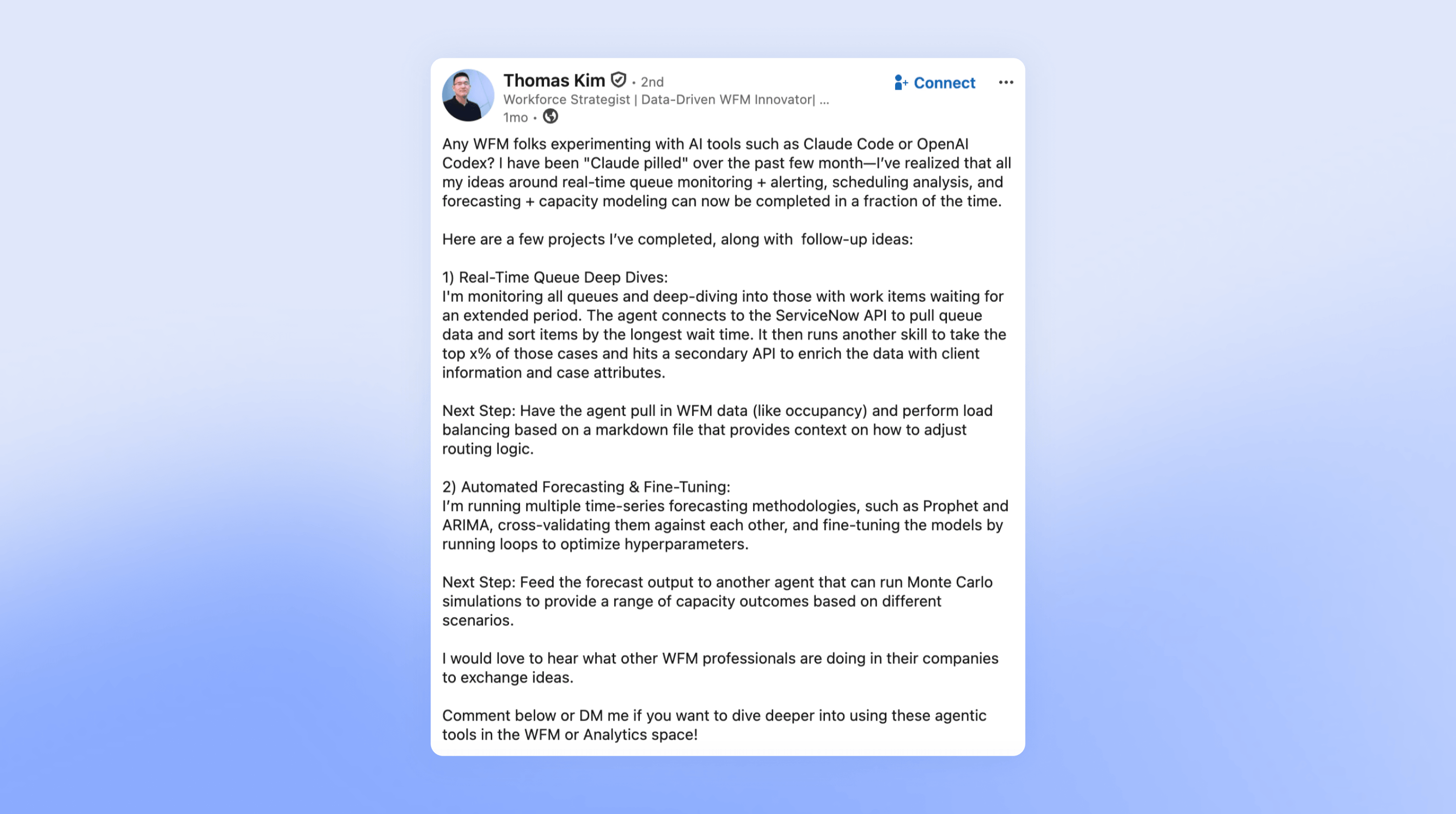

Thomas Kim at DraftKings built 19 Claude Skills that his entire WFM team uses. In traditional WFM, most people work out of a Google spreadsheet and run one scenario. With the skills library, his team runs 100 different scenarios. When Thomas is out, the skills still run. When a new analyst joins, they have the same tools on day one. That's the shift from "I use AI at work" to "we operate with AI."

Both examples share the same underlying structure: someone owned the infrastructure, standardized the output, and built a system the whole team runs on. That's what separates a personal workflow from how the team actually works.

How do you go from experimenting with AI to running it as a team?

Most AI adoption in WFM is happening bottom-up. One analyst discovers Claude, builds something useful, and uses it personally. But there's often zero organizational infrastructure around it. No one owns the connections. No one decides which workflows get standardized. When that analyst goes on vacation, the workflow breaks. When they leave, the knowledge walks out with them.

Three things close the gap between a personal workflow and how the whole team works.

1. Own it. Dedicate someone to manage the AI infrastructure for WFM — the tool connections, the skill library, the decisions about what gets standardized versus what stays experimental. This is the WFM equivalent of owning the tech stack.

2. Upskill the team. Not everyone needs to build agents from scratch. But every RTA, planner, and scheduler needs to know how to use the LLM tool, how to interpret its output, and when to question it.

3. Standardize the output. Bake your team's standards into packaged skills: which metrics matter, what thresholds trigger concern, what format leadership expects. The output should be consistent no matter who runs it. When Thomas Kim at DraftKings built his skill library, new analysts joined with the same tools on day one. That consistency is what turns a personal workflow into a team capability.
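One way to picture "standardize the output" is a single shared definition of which metrics matter and what counts as concerning. The sketch below is illustrative only: the metric names and thresholds are made-up placeholders, and this is not the format of any particular skill library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricStandard:
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_concerning(self, value):
        """True when the observed value is on the wrong side of the threshold."""
        if self.higher_is_better:
            return value < self.threshold
        return value > self.threshold


# Hypothetical team standards; substitute your own metrics and thresholds.
TEAM_STANDARDS = [
    MetricStandard("service_level", 0.80),
    MetricStandard("adherence", 0.90),
    MetricStandard("occupancy", 0.85, higher_is_better=False),
]


def flag_concerns(observed):
    """Return the names of metrics that breach the team's standards, in a fixed order."""
    return [
        s.name
        for s in TEAM_STANDARDS
        if s.name in observed and s.is_concerning(observed[s.name])
    ]


concerns = flag_concerns({"service_level": 0.76, "adherence": 0.93, "occupancy": 0.91})
print(concerns)
```

Because the thresholds live in one place, the report flags the same things no matter who runs it.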

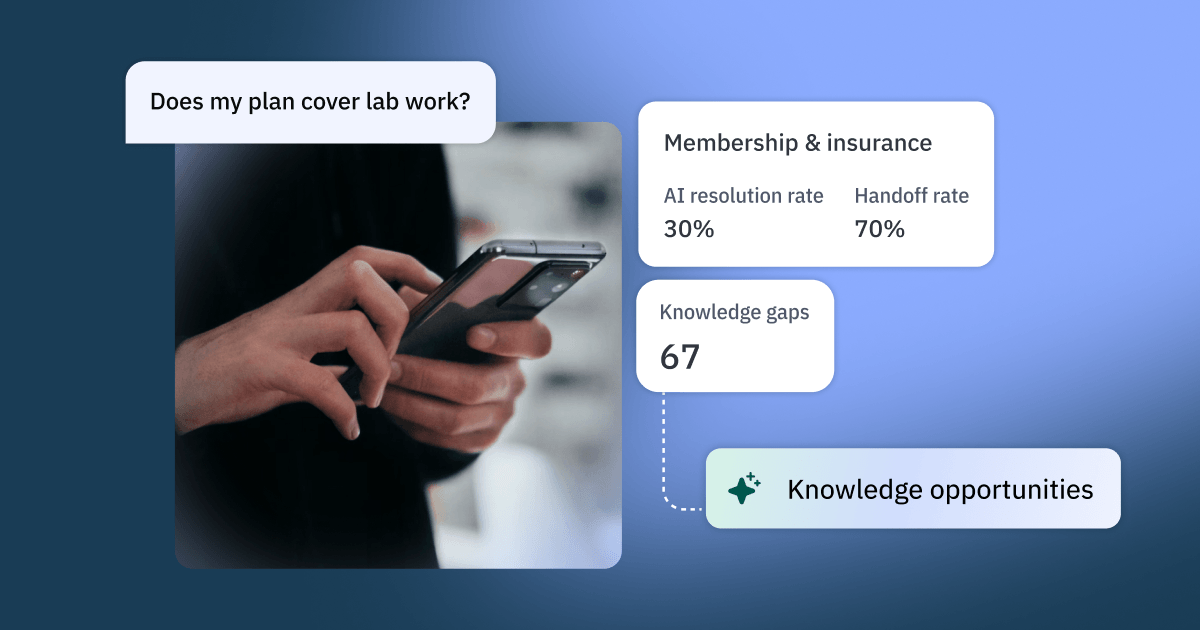

For teams already on Assembled WFM, the MCP (Model Context Protocol) connector is the practical bridge from file-upload experiments to live agentic analysis. Ask "which queues need attention this week?" without an MCP connection and Claude answers based on what's in your export. Ask the same question with Assembled connected and Claude cross-references adherence, breaks down by channel, surfaces the trend, and recommends an action, all against live data. Same question. Dramatically different answer.

What changes about the WFM role?

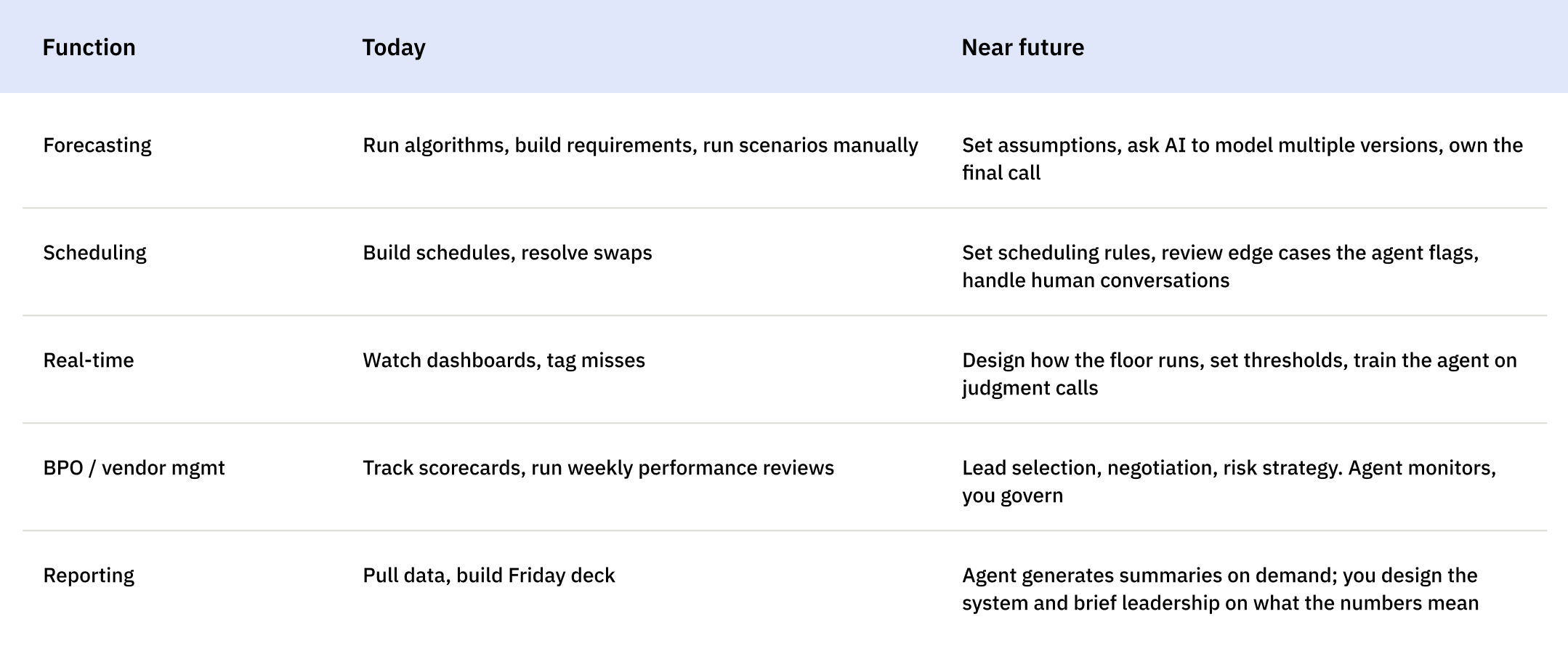

This is the question everyone is actually asking. The honest answer is: the work changes shape, but the function doesn't disappear. The pattern across every WFM role is the same: execution gets automated, judgment moves up.

The bottom rungs of each function — the routine monitoring, the report generation, the standard adjustments — get absorbed by automation. What remains is harder to automate and more valuable: orchestration, judgment, and accountability.

The career trajectory for WFM professionals who adapt is actually upward. BCG's analysis of AI's effect on work found that more roles will be reshaped than replaced, and that the roles that survive will demand greater expertise, more accountability, and a higher premium on judgment. Those roles are harder, not easier, and they pay more, not less.

The domain knowledge that takes a decade to build is the part AI can't replicate. An LLM told to "optimize staffing" recommends 95% occupancy because the math is efficient. Anyone with real WFM experience knows that's a burnout factory that spikes attrition within six weeks. An AI flags an adherence miss without knowing the agent was pulled into an emergency escalation by a supervisor — and generates a coaching write-up for someone doing the right thing. A model forecasts from last year's pattern without knowing about a product launch next week that will double contacts.
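The occupancy example is worth a quick back-of-the-envelope calculation, because it shows why the math looks so tempting. The sketch below uses a deliberately simplified staffing formula (workload divided by occupancy, ignoring queueing effects and shrinkage), and the 85% cap is an assumed guardrail for illustration, not a recommended value.

```python
import math

# Assumed guardrail: never plan above this occupancy, whatever the optimizer suggests.
MAX_OCCUPANCY = 0.85


def agents_required(workload_hours, interval_hours, occupancy):
    """Simplified headcount: workload spread over the interval at a target occupancy."""
    if not 0 < occupancy <= 1:
        raise ValueError("occupancy must be in (0, 1]")
    return math.ceil(workload_hours / (interval_hours * occupancy))


def apply_occupancy_cap(requested, cap=MAX_OCCUPANCY):
    """Clamp a requested occupancy target to the team's guardrail."""
    return min(requested, cap)


# 50 hours of handle time arriving in a one-hour interval.
naive = agents_required(50, 1, 0.95)
guarded = agents_required(50, 1, apply_occupancy_cap(0.95))
print(naive, guarded)  # 53 59
```

On paper, 95% occupancy "saves" six agents in that interval. The guardrail encodes what an experienced planner already knows: those six agents are the difference between a sustainable plan and one that burns people out.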

AI is powerful, but without WFM judgment behind it, it can be confidently wrong. That hard-won expertise, knowing what the data means before it tells you and knowing which tradeoffs are actually on the table, is exactly what keeps agentic systems from automating mistakes faster.

The WFM leaders who win the next decade will have two things: the domain expertise to set the right guardrails, and the AI fluency to build and govern the systems that run within them. The second is learnable: anyone can be doing it within 90 days.

Now is the right time to start

The experiments happening across WFM teams right now — the personal Claude prompts, the side scripts, the "let me try something" workflows — are real. They're also fragile. They depend on one person knowing how to prompt correctly, and they don't survive vacation.

The teams that operationalize in the next 12 months get a structural advantage that's hard for late movers to close. The tools, the data connections, and the framework all exist today.

Nobody in your company is better positioned to build this than the WFM team. You know the queues. You know the forecast. You know what good looks like before the data tells you. Engineers can't build the right agents without you. Executives can't govern them without you.

The tools exist. The data connections exist. The only thing that's missing is someone on your team deciding to own it.