What we learned by putting our voice AI into our own support queue

When Assembled put its own voice AI into its own support queue, the rollout made something clear that a lot of support teams are still learning the hard way: AI does not fail or succeed on the strength of the model alone. It rises or falls on the system around it: the scope, the handoffs, the knowledge, and the people responsible for improving it once it goes live.

That can be easy to miss in a market that still talks about AI like a launch event. But once AI starts handling real customer conversations, the question is no longer whether it works. The question is whether the operation around it is designed well enough to make it useful.

With AI voice agents, that question just gets harder to avoid.

A call center lesson that stuck

When Caitlyn Aguirre started her first call center job, she almost didn’t make it through new-hire training.

It wasn’t because she didn’t know the material. She had studied the product manuals, the policy docs, the troubleshooting steps. She knew the work. What rattled her was the phone.

On a call, everything is immediate. You can’t quietly reread the customer’s question. You can’t buy yourself time by tidying up a sentence before you hit send. You don’t have body language to help carry the interaction. The phone rings, and you have to respond to a real person with a real problem in real time.

Eventually, she answered a call and solved the customer’s issue. But she was so focused on getting through the interaction that she failed to document the call, a basic requirement of the job.

It was an early lesson in how unforgiving voice can be, and how much the system around the interaction has to do to keep it on track.

Today, Caitlyn leads Support at Assembled. So when the team decided to put Assembled’s own AI voice agent into its own support queue, she wasn’t thinking first about novelty. She was thinking about what voice demands from the system around the agent: clear guidance, the right context, well-defined handoffs, and as little room for confusion as possible.

The real work was designing the experience

In one sense, the rollout was refreshingly straightforward.

“Of all the telephony implementations I’ve had to do, this one was very plug and play,” Caitlyn said. Part of what made that possible was that the support team could build and manage the rollout largely on its own, without a heavy engineering lift or outside implementation support. They had their first agentic workflows live within a few weeks.

But even if the setup was relatively easy, the operational design around it wasn’t. As Caitlyn had learned in a previous role, it is entirely possible to “succeed” at an AI rollout on paper and still damage the operation underneath. In that case, leadership had pushed hard for automation of first-touch support. The team delivered it. Quality held. The numbers looked good. But the wider flow of work became harder to manage because the AI layer had been inserted without enough thought about what happened after that first response.

“I got so overly focused on automating the first touch,” she said. “But I did not zoom out at any point to think about the wider architecture of how this would actually flow and fit in the overall operation.”

That experience shaped how Assembled approached its own rollout. The goal was not to make voice AI do the most. It was to make it do the right things well.

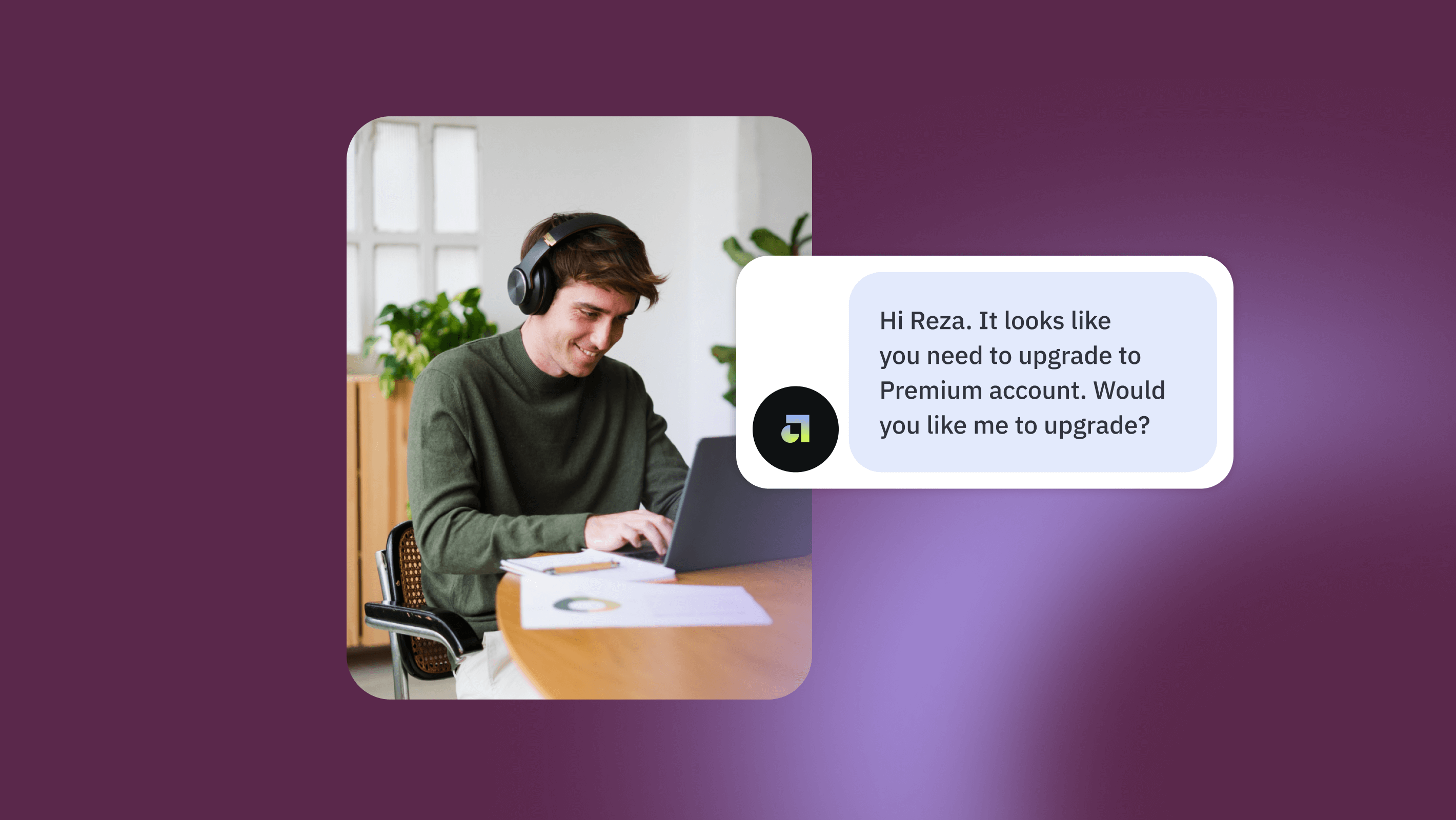

From there, the real questions were: What kinds of issues should voice AI handle on its own? Which ones should escalate immediately? What would count as a successful, self-contained voice AI interaction, and what should route to a human without pretending otherwise?

For Assembled (and many of our customers), the answer was to focus on Tier 1 contacts: recurring, transactional questions where speed and consistency matter, and where customers benefit from immediate help or a clear next step. More advanced troubleshooting, especially issues that require account-specific investigation, stayed out of scope.

That was a customer experience decision. It was also a staffing decision.

In support, scope and coverage are linked. Deciding what AI handles is part of deciding what humans need to be available for, when, and in what volume. If voice AI takes a certain class of routine contacts off the table, that changes the shape of the work the team needs to staff for. If it creates handoffs into another queue, that affects load there too. Those are not separate conversations.

That is one reason broad automation mandates tend to break down in the real world. They flatten support into a single bucket and treat more automation as inherently better. But good support operations do not work that way. They depend on boundaries.

In practice, an AI that does fewer things consistently earns more trust than one that tries to handle everything.
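One way to make that kind of boundary explicit is to write the scope down as data rather than leaving it implicit in prompts. The sketch below is illustrative only: the intent names, the `Contact` fields, and the `route` function are hypothetical and are not Assembled's implementation. It assumes some upstream step has already labeled the caller's intent and flagged whether the issue needs account-specific investigation.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AI_HANDLES = "ai_handles"
    ESCALATE = "escalate_to_human"


@dataclass(frozen=True)
class Contact:
    intent: str                        # hypothetical label from an upstream classifier
    needs_account_investigation: bool  # hypothetical flag set upstream


# Hypothetical Tier 1 intents: recurring, transactional questions.
TIER1_INTENTS = frozenset({"password_reset", "billing_date", "integration_setup_steps"})


def route(contact: Contact) -> Route:
    """Keep the AI inside a narrow, explicit scope; everything else goes to a human."""
    # Account-specific investigation is out of scope, whatever the intent.
    if contact.needs_account_investigation:
        return Route.ESCALATE
    if contact.intent in TIER1_INTENTS:
        return Route.AI_HANDLES
    # Unknown or unlisted intents default to a human rather than a guess.
    return Route.ESCALATE
```

The design choice worth noting is the default: anything not explicitly listed escalates, so widening the AI's scope is a deliberate edit to the list rather than an accident.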

In voice, the handoff is part of the product

The rollout made one thing especially clear: the handoff is not a secondary detail. It is an essential part of the customer journey.

In email, a clunky transition can be annoying. In chat, it can feel slow. In voice, it is exposed immediately. A pause feels longer. Uncertainty feels sharper. Repetition is more noticeable. If the system cannot help and the next step is vague, the customer feels that friction in real time.

That is why the team spent so much time thinking about escalation paths before launch.

“We did have to design that handoff procedure to make sure that we don’t leave customers hanging,” Caitlyn said.

That meant deciding what belonged in a standard path and what should branch off immediately. It meant being clear about which issues really could be handled in-channel and which ones needed a different motion altogether. It also meant acknowledging that a good handoff is not just a technical fallback. It is part of what makes the whole system feel coherent.

There’s still no perfect knowledge base

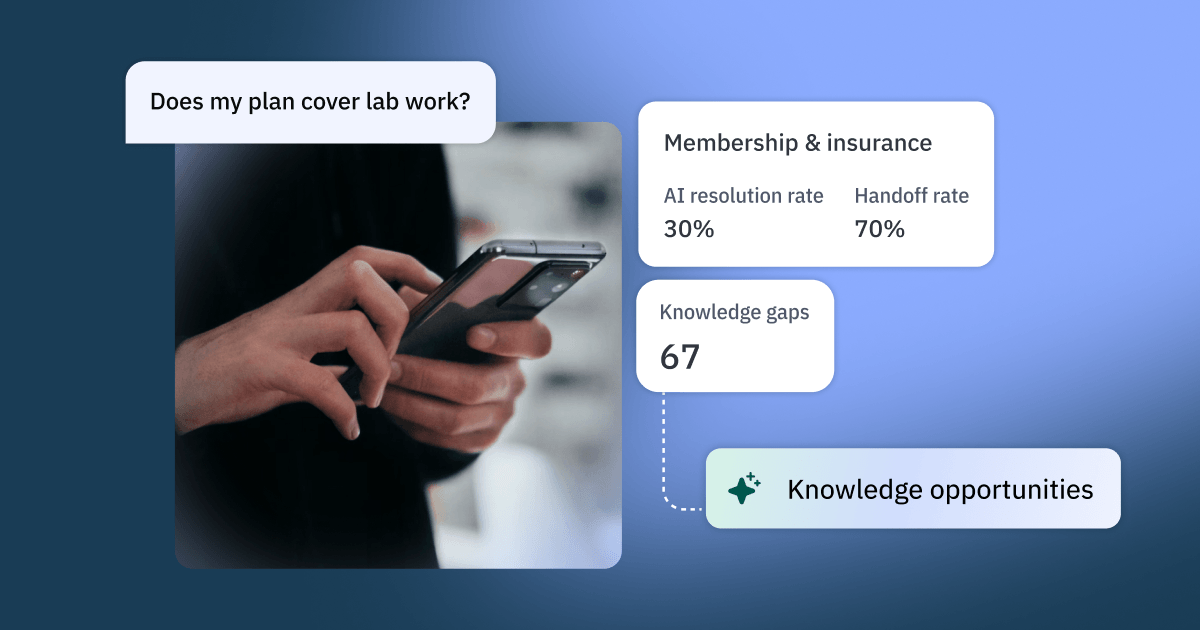

If voice made handoffs more visible, it also made a familiar problem harder to ignore: the knowledge base was never as complete as the team wanted it to be.

Thomas Miller, who has been closely involved in building and refining Assembled’s AI support workflows, described his role simply: identify recurring question types, help build the workflows that respond to them, then review and refine the guidance over time.

In practice, that work surfaced a problem many support teams will recognize.

“Prior to doing the work here, our knowledge base, especially the external knowledge base, was missing a lot of stuff,” Thomas said.

The problem wasn’t a total lack of documentation. It was that the documentation mostly covered the happy path. There might be a setup article for an integration, for example, but not enough guidance for the follow-up questions customers actually ask once the setup breaks, partially works, or runs into edge cases.

Thomas was candid about what that revealed about the team’s own operation. “Our support org really relied on tribal knowledge,” he said. “Now we’re trying to get much better at documenting this stuff.”

That admission matters partly because it is so common. Experienced support teams are often running on a deep reserve of memory, pattern recognition, and workarounds that live in people rather than in systems. Human agents can compensate for that, at least for a while. AI cannot do nearly as much with undocumented intuition.

“If there are some cases where it’s just like, hey, this is an integration that’s not documented at all, I would probably start with the knowledge base,” Thomas said. “That way, it’s going to help your email agent, chat agent, and voice agent all at once. And really fill in the gaps across the board. And, again, help your human agents as well.”

That is where a lot of teams get stuck. Once they realize how much AI depends on documentation, they start preparing for a “ready” state that never quite arrives. Knowledge is never finished, especially in a product that keeps changing.

Assembled’s own experience reinforced a more practical approach: start with a knowledge base that is good enough to support the first version, then use live AI interactions to reveal what is still missing. In some cases, workflows can help bridge those gaps by adding more structure or context around a specific interaction. But that does not remove the need for better documentation. It just makes the work more visible.

The real question is not whether to wait for perfect knowledge or launch without it. It is how to start with enough documentation to be useful, then keep improving the system once real conversations show you what is missing.

Getting it right takes iteration

As the team started building workflows, they discovered something else: a weak result was not always proof that a human was more suited to the task. Sometimes it was proof that the workflow, the prompt, or the knowledge architecture needed work.

Early on, Thomas said, the team defaulted to structures that were too rigid, more like literal decision trees than flexible guidance. That rigidity works in very standardized environments. It works less well when customers phrase the same underlying issue in many different ways.

That matters even more in voice, where people do not speak the way they type. They use shorthand. They trail off. They say “gCal” instead of “Google Calendar,” or “my calendar” instead of the exact product term your team uses internally. They give partial context and expect the system to keep up.

So the challenge was not just writing better answers. It was building systems that could recognize the messier shape of real customer language.
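As a rough illustration of one small piece of that problem, spoken shorthand can be mapped to canonical product terms before a transcript reaches workflow matching. This is a minimal sketch, not a description of how Assembled's agent works: the alias table and the `normalize_utterance` function are hypothetical, and treating "my calendar" as Google Calendar is an assumption that would only hold where no other calendar integration is in play.

```python
import re

# Hypothetical alias table: spoken shorthand -> canonical product term.
ALIASES = {
    "gcal": "Google Calendar",
    "google cal": "Google Calendar",
    "my calendar": "Google Calendar",  # assumption: only one calendar integration
}

# One case-insensitive pattern, longest aliases first, matched on word boundaries
# so that "Google Calendar" itself is left untouched.
_ALIAS_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(a) for a in sorted(ALIASES, key=len, reverse=True))
    + r")\b",
    re.IGNORECASE,
)


def normalize_utterance(text: str) -> str:
    """Replace known shorthand with the canonical term in a single pass."""
    return _ALIAS_PATTERN.sub(lambda m: ALIASES[m.group(1).lower()], text)
```

A lookup table like this only covers the shorthand someone has already noticed, which is part of why reviewing live conversations matters.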

“It definitely does take some practice to get good at writing these guidance steps for the AI agents,” Thomas said. “Sometimes it’s gonna take things literally.”

He gave one example from a Google Calendar-related workflow. The prompt told the AI agent to first identify which direction of the integration the customer was asking about. But even when the customer had already stated that plainly, the system kept asking for clarification. As Thomas described it, the AI was over-indexing on the instruction itself.

A human agent probably would not have made that mistake. The AI did.
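The fix for that kind of behavior is usually to make the clarifying question conditional: ask only when the information is actually missing. The sketch below shows the shape of that logic in plain code. It is hypothetical throughout: the direction labels, the cue phrases, and the function names are assumptions for illustration, and a real agent would rely on the model's own understanding of the call rather than substring checks.

```python
from typing import Optional

# Hypothetical cue phrases for each direction of the integration.
DIRECTION_CUES = {
    "calendar_to_app": ("from google calendar", "from my calendar"),
    "app_to_calendar": ("to google calendar", "to my calendar"),
}


def detect_direction(utterance: str) -> Optional[str]:
    """Return the direction only if exactly one is clearly stated."""
    text = utterance.lower()
    matches = [
        direction
        for direction, cues in DIRECTION_CUES.items()
        if any(cue in text for cue in cues)
    ]
    return matches[0] if len(matches) == 1 else None


def next_step(utterance: str) -> str:
    """Ask for clarification only when the caller has not already answered."""
    direction = detect_direction(utterance)
    if direction is None:
        return "ask_direction"
    return f"troubleshoot:{direction}"
```

In prompt terms, the equivalent change is wording the guidance as "if the customer has not said which direction, ask" rather than "first identify the direction."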

Teams often see brittle behavior and conclude that AI cannot handle support complexity. Sometimes that conclusion is fair. But sometimes the more accurate takeaway is that the system is poorly designed for the task it has been given.

That is why iteration matters so much after launch. The first version is not the verdict. It is the beginning of the learning.

The next trap is workflow sprawl

One of the more practical cautions Thomas raised concerned overlap. As teams get better at building workflows, it becomes easier to build more of them. But if those workflows sit too close together, or cover adjacent issues without a clear enough distinction, the system can start triggering the wrong one for the wrong scenario.

“You don’t want to end up in a situation where you’ve built out a hundred workflows, but you’re accidentally triggering the wrong ones in the wrong cases,” Thomas said.

In other words, AI support does not just need automation. It needs architecture.

That means being intentional about when to create a new workflow and when to combine several related scenarios into one broader structure. It means watching for collisions between similar issue types. And it means recognizing that operational quality can degrade quietly if no one is responsible for monitoring that complexity as it grows.
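Watching for collisions can start with something very simple. The sketch below assumes each workflow is described by a handful of example trigger phrases, then flags pairs whose vocabulary overlaps heavily, using Jaccard similarity on word sets. The workflow names, the phrases, and the 0.5 threshold are all hypothetical, and word overlap is a crude stand-in for however a real system decides which workflow to trigger. It is a prompt for human review, not a verdict.

```python
from itertools import combinations


def tokens(phrases):
    """Lowercased set of words across a workflow's example trigger phrases."""
    return {word for phrase in phrases for word in phrase.lower().split()}


def jaccard(a, b):
    """Shared words divided by total distinct words; 0.0 if both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_overlaps(workflows, threshold=0.5):
    """Return (name_a, name_b, score) for workflow pairs at or above the threshold."""
    flagged = []
    for name_a, name_b in combinations(sorted(workflows), 2):
        score = jaccard(tokens(workflows[name_a]), tokens(workflows[name_b]))
        if score >= threshold:
            flagged.append((name_a, name_b, round(score, 2)))
    return flagged


# Hypothetical workflows and trigger phrases.
example_workflows = {
    "gcal_sync_failure": ["calendar not syncing", "calendar sync broken"],
    "gcal_setup": ["calendar not syncing", "connect calendar"],
    "password_reset": ["reset my password"],
}
```

Run against `example_workflows`, `find_overlaps` flags the two calendar workflows as a pair worth reviewing, either to merge them or to sharpen the distinction between them.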

That is not just a workflow design problem. It is an ownership problem.

A lot of teams are still structured as if AI is a layer that can be added to support without changing how support itself is owned. Agents handle tickets. Someone else builds workflows. Knowledge updates happen eventually. Improvements get made when someone has time. That model does not hold up especially well once AI is customer-facing.

AI systems drift. Gaps compound. Overlap accumulates. Performance gets uneven.

If no one owns the full loop, from what customers are asking to how the system is responding to what needs to change next, the operation gets worse quietly before it gets better intentionally.

At Assembled, workflow ownership is increasingly part of the support role itself. The people closest to recurring customer questions are also helping build, monitor, and improve the workflows that respond to them.

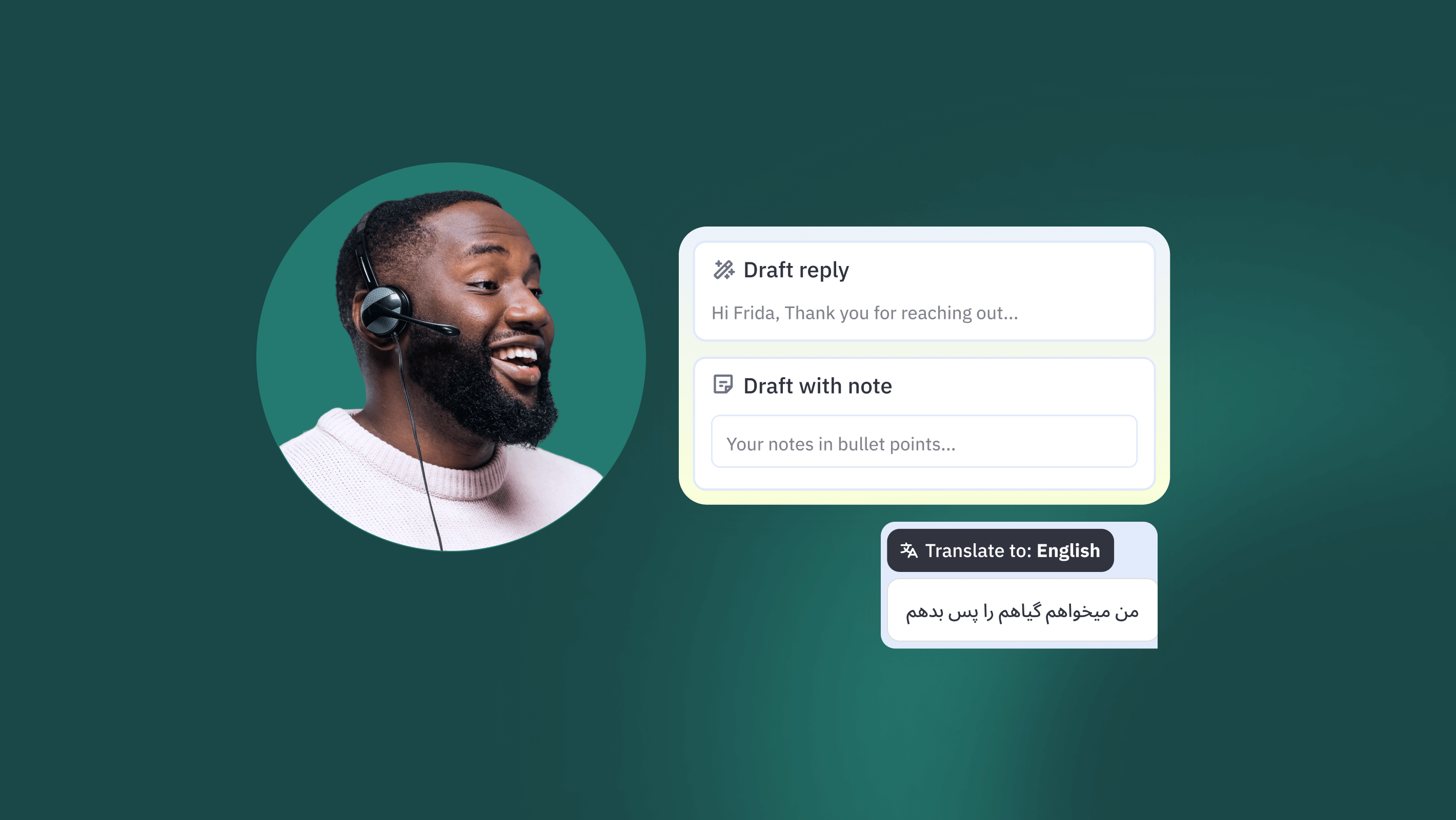

Support work is changing, but not disappearing

None of this points to a future with less need for support people. If anything, it points to a future where support judgment matters in different places.

At Assembled, one of the most notable shifts has been in who is expected to contribute to the system. Agents are not just expected to spot repeated customer questions. They are increasingly expected to help encode the answers, contribute workflows, and improve the support experience upstream.

That is a meaningful change. Historically, those kinds of improvements often sat with a separate support operations or systems team. AI collapses some of that distance. The people closest to the work are often the ones best positioned to improve how the system handles it.

Caitlyn put it simply: “Maybe they become editors and fact-checkers rather than typists.”

That line gets at something important. Good AI support does not remove the need for human expertise. It changes where that expertise creates value, shifting support work toward systems thinking, technical fluency, and human judgment.

And in that sense, support has been here before. The tools change. The channels evolve. IVRs happened. Macros happened. Chat happened. AI is a bigger shift than some of those, but it is not the first time support teams have had to adapt their craft to new technology. The strongest teams will do what strong support teams have always done: absorb the change, learn the system, and keep designing for the customer experience on the other side of it.

What our own rollout made clear

Putting our own voice AI into a real support queue made one thing clear: the hard part is not the model. It is the operation around it.

If AI is underperforming in your support operation, the answer is not always a smarter model. Sometimes it is a better-designed system.