3 things to look out for when choosing an AI copilot for customer support

If you’re evaluating an AI copilot for customer support, you’re probably hearing a lot of big promises.

Copilots are pitched as the fastest way to scale support: faster responses, happier agents, lower costs — all without sacrificing quality. And in theory, that’s exactly what they should do. But in practice, not every AI copilot is built to operate inside the real-world complexity of a support organization.

Support leaders don’t just need “AI.” They need a copilot they can trust — one that works alongside agents, respects operational guardrails, and delivers measurable improvements without introducing new risk. The wrong copilot can confuse agents, frustrate customers, and create more oversight work instead of less.

That's why choosing an AI copilot isn't about flipping a switch or chasing the most advanced model. It's about understanding how the copilot works, how it earns trust over time, and how clearly it proves its value.

The best AI copilots are built on three essential pillars: quality (responses you can trust), coverage (impact that actually matters), and control (transparency you can manage). Without all three working together, you're left with unreliable automation, negligible impact, or a system you can't confidently scale. In this article, we'll walk through three things to look out for when evaluating an AI copilot for customer support, so you can make sure your investment delivers on quality, coverage, and control.

Be wary of hasty AI copilot implementations

Think rolling out an AI copilot is as simple as flipping a switch? It isn’t. You wouldn’t expect a new support agent to master everything on day one, and you shouldn’t expect it of a copilot that’s embedded directly in agent workflows.

Anyone selling an AI copilot for customer support should be able to clearly explain how that copilot earns trust over time — what it does on day one, how it expands responsibly, and how value compounds at each stage. Without that clarity, it’s easy to spend months burning through resources for little real return.

This is the phased approach we’ve taken with Assembled’s AI Copilot, an agent copilot and issue resolution engine powered by generative AI.

Right out of the box, Copilot starts by providing agents with instant answers pulled directly from your knowledge base.

Once teams are comfortable with the basics, Copilot begins auto-drafting responses that agents can review, tweak, and finalize.

Finally, Copilot can automate a meaningful portion of interactions using the trust and context built during the earlier phases of implementation.

By the time this rollout is complete, Copilot is confidently handling specific types of interactions end-to-end, freeing up human agents to focus on more complex, high-impact work.

This crawl-walk-run approach isn't just safer; it's smarter. Each stage delivers value on its own and generates real data about what works in your specific environment, which workflows are ready for automation, and where human judgment still adds the most value. That data becomes the foundation for expanding automation with confidence, not guesswork.
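To make the idea concrete, here is a minimal Python sketch of how data from one phase could gate the move to the next. Everything in it is hypothetical: the `Phase` names, the `WorkflowStats` fields, and the thresholds (200 reviewed interactions, a 90% acceptance rate) are illustrative assumptions, not Assembled's actual rollout logic or API.

```python
from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    ANSWERS = 1     # surface knowledge-base answers to agents
    AUTO_DRAFT = 2  # draft replies that agents review and edit
    AUTOMATE = 3    # handle the interaction end-to-end


@dataclass
class WorkflowStats:
    """Hypothetical per-workflow numbers collected during the current phase."""
    name: str
    phase: Phase
    reviewed: int  # interactions a human has reviewed in this phase
    accepted: int  # of those, how many needed no substantive correction


def next_phase(stats: WorkflowStats,
               min_reviewed: int = 200,
               min_accept_rate: float = 0.9) -> Phase:
    """Advance a workflow one phase only when the evidence supports it."""
    if stats.phase is Phase.AUTOMATE:
        return Phase.AUTOMATE  # already at the final phase
    if stats.reviewed < min_reviewed:
        return stats.phase     # not enough data to decide yet
    if stats.accepted / stats.reviewed < min_accept_rate:
        return stats.phase     # quality is not there yet
    return Phase(stats.phase.value + 1)
```

With these assumed thresholds, a workflow with 470 of 500 drafts accepted would move from auto-drafting to automation, while one with 300 of 500 accepted would stay where it is. The point of the sketch is that each workflow advances independently, on its own evidence.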

Demand full transparency of how the AI copilot operates

Trust isn’t given — it’s earned. And when an AI copilot is actively shaping agent responses and customer interactions, transparency becomes non-negotiable.

If you can’t see what your copilot is doing, or understand why it’s behaving a certain way, you can’t confidently put it in front of customers. The best AI copilots provide clear visibility into every part of their operation, from the answers surfaced to agents to the responses drafted or automated for customers.

Just as importantly, transparency enables control. Knowing when and why a copilot activates automation allows support leaders to set boundaries, intervene when needed, and ensure the system remains an asset rather than a liability as usage expands.

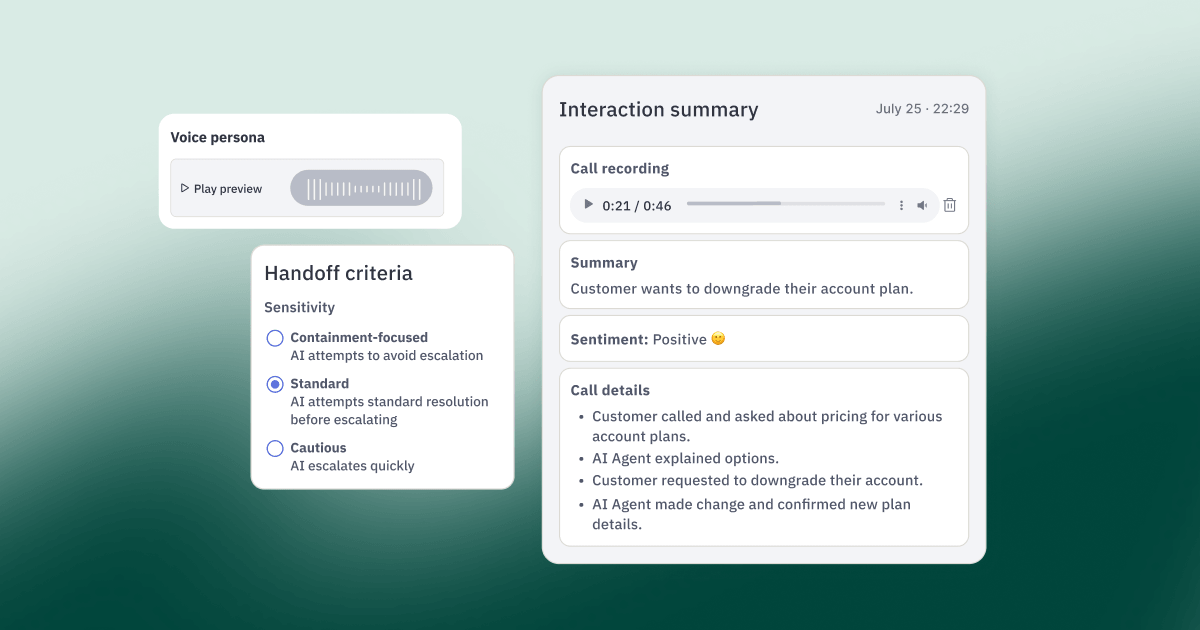

A strong agent copilot should also support regular quality checks. Reviewing AI-assisted interactions on an ongoing basis helps teams catch issues early, refine responses, and improve performance over time — without waiting for customer complaints to surface problems.

With Assembled’s AI Copilot, for example, users have full visibility into AI-generated answers during the early phases of implementation. This often surfaces gaps or inaccuracies in the knowledge base, which can be addressed immediately to improve answer quality. Teams can also see where agents modify auto-drafted responses, creating a clear feedback loop that builds confidence in more advanced automation down the line.
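As a rough illustration of that feedback loop, the sketch below compares each AI draft with the reply the agent actually sent and flags the ones that were heavily rewritten. It uses Python's standard `difflib` module. The record fields (`id`, `draft`, `sent`) and the 0.8 similarity threshold are assumptions made for the example, not a description of how Assembled measures edits.

```python
from difflib import SequenceMatcher


def edit_similarity(draft: str, sent: str) -> float:
    """Word-level similarity between the AI draft and the sent reply (0.0 to 1.0)."""
    return SequenceMatcher(None, draft.split(), sent.split()).ratio()


def heavily_edited(records, threshold: float = 0.8):
    """Return ids of records whose sent reply diverged a lot from the draft.

    `records` is an iterable of dicts with hypothetical keys
    "id", "draft", and "sent".
    """
    return [
        r["id"]
        for r in records
        if edit_similarity(r["draft"], r["sent"]) < threshold
    ]


records = [
    {"id": "t1",
     "draft": "You can reset your password from the login page.",
     "sent": "You can reset your password from the login page."},
    {"id": "t2",
     "draft": "You can reset your password from the login page.",
     "sent": "Please contact billing about your refund."},
]
flagged = heavily_edited(records)  # only "t2" falls below the threshold
```

Flagged interactions are good candidates for review: a heavy rewrite may point to a gap in the knowledge base, a tone mismatch, or a query type that still needs a human.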

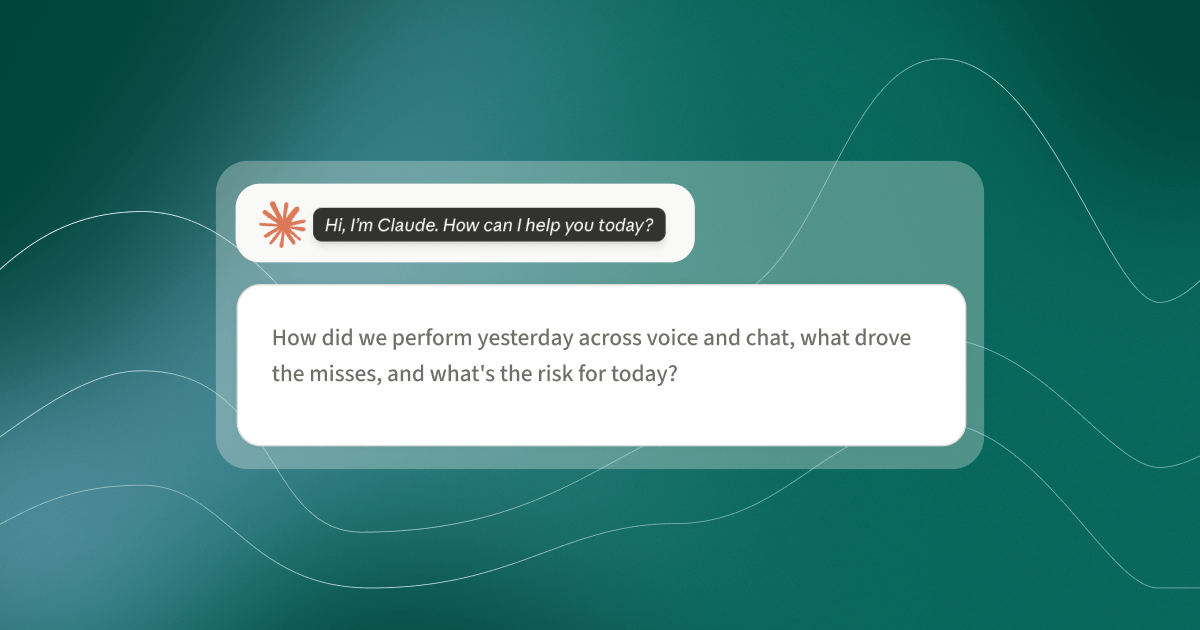

Beyond visibility into individual interactions, the most sophisticated AI copilots connect that visibility to broader operational data. For example, when your AI copilot works alongside your workforce management system, you can see not just what AI is doing, but how it's affecting queue performance, agent capacity, and service levels in real time. This unified view makes AI a predictable, manageable part of your operation rather than a black box that disrupts it.

By demanding transparency from your AI copilot, you’re not just deploying new technology — you’re integrating a system you can understand, guide, and trust. That’s what makes it possible to scale automation responsibly while improving both efficiency and customer experience.

Ensure clear, robust, and actionable reporting

The value of an AI copilot is only as strong as the improvements it delivers — and you can’t improve (or justify) what you can’t measure.

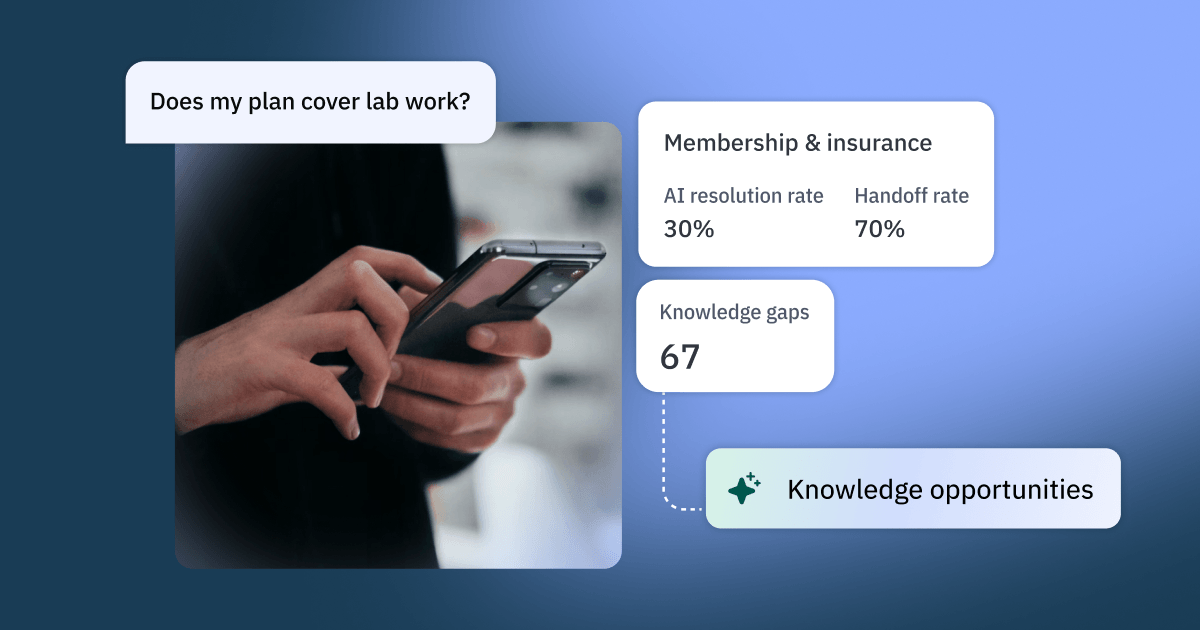

Yet many AI solutions treat reporting as an afterthought, offering vanity metrics like "messages sent" without connecting AI activity to actual business outcomes. The best AI copilots go further: they tie every AI action to operational metrics that matter — handle time, CSAT, resolution rate, and agent productivity — so you can make decisions based on impact, not just activity.
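A simple version of that connection can be sketched in a few lines of Python: split tickets by whether the copilot assisted, then compare average handle time and CSAT for each group. The ticket fields used here (`assisted`, `handle_seconds`, `csat`) are hypothetical and stand in for whatever your helpdesk exports. A side-by-side average like this shows correlation only, so treat it as a starting point rather than proof of impact.

```python
from statistics import mean


def summarize(tickets):
    """Average handle time and CSAT for assisted vs. unassisted tickets.

    `tickets` is an iterable of dicts with hypothetical keys:
    "assisted" (bool), "handle_seconds" (number), "csat" (number or None).
    """
    groups = {True: [], False: []}
    for t in tickets:
        groups[bool(t["assisted"])].append(t)

    summary = {}
    for assisted, rows in groups.items():
        # Not every customer answers the survey, so skip missing scores.
        scores = [r["csat"] for r in rows if r["csat"] is not None]
        summary[assisted] = {
            "count": len(rows),
            "avg_handle_seconds": mean(r["handle_seconds"] for r in rows) if rows else None,
            "avg_csat": mean(scores) if scores else None,
        }
    return summary


tickets = [
    {"assisted": True, "handle_seconds": 240, "csat": 5},
    {"assisted": True, "handle_seconds": 360, "csat": None},
    {"assisted": False, "handle_seconds": 600, "csat": 4},
    {"assisted": False, "handle_seconds": 400, "csat": 3},
]
report = summarize(tickets)
```

In practice you would also segment by queue or issue type, since assisted and unassisted tickets often differ in difficulty.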

The goal isn’t just visibility, but clarity: reporting that helps you understand what the copilot is doing, where it’s helping, and where human intervention or refinement is still needed.

Strong reporting turns your AI copilot into an accountable system. It allows support leaders to evaluate performance, set guardrails around automation, and make confident decisions about when (and where) to expand its role.

With Assembled’s AI Copilot, for example, reporting is designed to help teams monitor operational impact in real time and act on what they see. Dashboards surface key signals around accuracy, adoption, and customer outcomes, making it easier to connect copilot behavior to business results.

Here's how Copilot helps teams keep tabs on performance and accountability:

- Copilot Impact Report: Tracks adoption, usage, and performance across your team — showing which features agents are using, how Copilot affects handle time and CSAT, and where AI assistance is making the biggest difference.

- AI Quality Page: Provides a centralized place to review the accuracy and behavior of AI interactions, with evaluations covering reply correctness, knowledge retrieval accuracy, and style alignment — helping you identify where improvements are needed.

- Automations report: Links customer satisfaction scores directly to AI-generated responses, making it possible to assess how automated interactions are landing with customers.

- Auto drafts report: Highlights outcomes of AI-drafted responses, helping teams identify which queries are well-suited for copilot assistance and which still benefit from a human touch.

By demanding clear, actionable reporting from your AI copilot, you gain more than insight — you gain proof. Proof of impact, proof of value, and the confidence to scale automation responsibly without sacrificing customer experience.

Embrace customer support automation with confidence

Choosing the right AI copilot for customer support is about more than adopting new technology. It's about making a strategic investment that delivers quality you can trust, coverage that matters, and control you can maintain.

The most effective AI copilots are rolled out in deliberate phases (building quality at each stage), operate transparently (giving you the control to set boundaries), and clearly demonstrate their impact through actionable reporting (proving they're covering enough volume to justify the investment).

If you’re actively evaluating AI copilots, seeing how these principles show up in a real product can help clarify the differences between vendors. Assembled’s AI Copilot is built to support this kind of thoughtful, accountable automation — from day one through full-scale deployment.

Ready to see how it works in practice? Take a self-guided demo now.